NGINX Proxy Manager

What’s it all about?

My home server was just revolutionized! I’ve run several websites on my home network for years for testing purposes. Recently I was doing some work for hire and I needed to open them up to the wider internet. In the past I would just open up a bunch of port forwards and be happy.

Port forwarding: generally web traffic travels through various devices on port 80 (http) or port 443 (https). You can open up other ports on your router and forward them to specific devices, e.g. external traffic sent to http://macblaze.ca:8083 -> internal route 192.168.1.250:80

This results in opening a bunch of ports on your router (insecure) and having to give clients and others odd-looking URLs like macblaze.ca:8083.

And recently Shaw upgraded their routers to use a fancy web interface that actually removes functionality in the name of making things easier. So my Linux server, which had a virtual NIC (network interface card) with a separate IP, didn’t show up on their management site and I was unable to forward any external traffic to it.

But up until this week it was a c’est la vie sort of thing as I struggled to try and figure out how to get the virtual NIC to appear on the network. And then I saw this video about self hosting that talked about setting up a reverse proxy server.

NGINX Proxy Manager

Find it here: nginxproxymanager.com

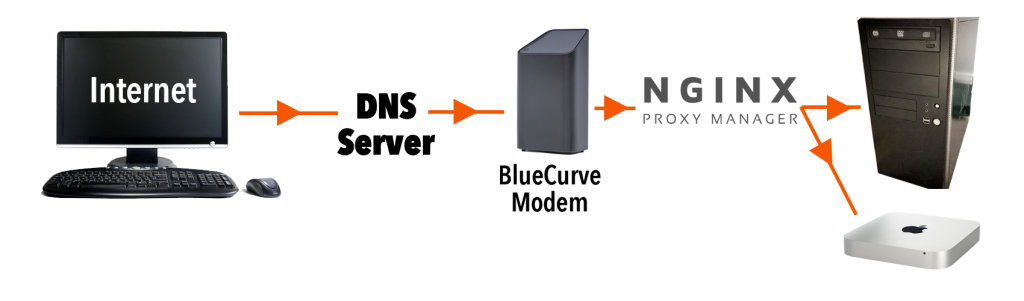

Turns out this was what I was supposed to be doing all along. A reverse proxy receives all the incoming web traffic and routes it by the hostname in the request rather than by port. So now that I have it set up I can just add a CNAME to my DNS setup, like testserver.myserver.com, and it will send traffic to my home IP on the normal port 80. My router lets it through and passes it to the proxy server, which parses the name and sends it on to the proper machine/service. Whenever I set up a new project I can add testserver2.myserver.com and the proxy server will send it where it belongs on my internal setup.

So cool.
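To make the idea concrete, here is a toy sketch in shell of what "routing by name" means. It is not how NGINX Proxy Manager works internally, just the lookup idea. The function name route_for_host and the second internal address (192.168.1.251) are made up for illustration.

```shell
# Toy routing table: hostname from the request -> internal address:port.
# The function name and the 192.168.1.251 address are hypothetical.
route_for_host() {
  case "$1" in
    testserver.myserver.com)  echo "192.168.1.250:8090" ;;
    testserver2.myserver.com) echo "192.168.1.251:80" ;;
    *)                        echo "no-route" ;;
  esac
}

# Every request arrives on the same port; only the name decides the target.
for host in testserver.myserver.com testserver2.myserver.com unknown.myserver.com; do
  printf '%s -> %s\n' "$host" "$(route_for_host "$host")"
done
```

Adding a new project is then just one more line in the table, which is roughly what adding a proxy host in the GUI does for you.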

My Set Up

I used to have some ports going to my Mac mini server and some ports to my Linux machine. Now all traffic is directed to the Linux box. It runs NGINX Proxy Manager (NPM) in a Docker container and receives traffic on port 80. I moved the two websites hosted on that box to ports 8090, and NPM now sorts traffic to them based on the various CNAMEs I added to my hosting.

CNAMEs

A CNAME (canonical name) record is an alias: it points one name at another name rather than at an IP address. www.macblaze.ca is a CNAME for macblaze.ca, so if the IP address for macblaze.ca changes, www.macblaze.ca will still go to the right place. If I set up a domain myserver.com that points to the IP assigned to our house by our ISP (Shaw, Telus etc.), I can then set up the CNAME testserver.myserver.com, which arrives at the same IP and gets sorted out internally by the proxy. If our IP ever changes (which it used to do quite often), I only have to change the one record and all the CNAMEs will still work.
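Here is a small shell sketch of how that chain resolves. The zone data is invented (203.0.113.10 is a documentation-only address, and lookup and resolve are hypothetical helper names), but it shows why only the one A record ever needs to change.

```shell
# Toy zone data: one A record holds the IP, the CNAMEs just point at that name.
# All values here are made up for illustration.
lookup() {
  case "$1" in
    myserver.com)             echo "A 203.0.113.10" ;;
    testserver.myserver.com)  echo "CNAME myserver.com" ;;
    testserver2.myserver.com) echo "CNAME myserver.com" ;;
    *)                        echo "NXDOMAIN -" ;;
  esac
}

# Follow CNAMEs until an A record is found; give up after a few hops.
resolve() {
  name=$1
  hops=0
  while [ "$hops" -lt 8 ]; do
    set -- $(lookup "$name")      # $1 = record type, $2 = record value
    case "$1" in
      A)     echo "$2"; return 0 ;;
      CNAME) name=$2 ;;
      *)     return 1 ;;
    esac
    hops=$((hops + 1))
  done
  return 1
}

resolve testserver.myserver.com
```

On a real setup, something like dig +short testserver.myserver.com should show the same kind of chain: the alias target first, then the IP.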

Docker

Docker is a virtualized container system. I haven’t a lot of experience with it, but this iteration of the NGINX proxy is a GUI-based implementation of the command line version, and the developer decided to set it up as a container (sort of a mini virtual computer) so he could easily roll out updates as necessary. So my poor old Linux box is now running containerized software on top of being a web server and a Linux sandbox. Not bad for something from 2009. I will start playing a bit more with Docker because it allows you to build a container and implement it with all sorts of things without affecting the main machine and, best of all, throw out any changes and start again. We will see if the old PC is up to it or not.

I also installed docker-compose, which starts the containers described in a single file and can run them “headless” in the background.

Here’s a good video on the process:

The Process

Docker

(From the video)

Update the Linux system:

– sudo apt update

– sudo apt upgrade

– sudo apt install docker.io

Start

– sudo systemctl start docker

– sudo systemctl enable docker

– sudo systemctl status docker

Check to see if it’s working by checking the version: docker -v

Then test by installing a test container:

– sudo docker run hello-world

Docker-Compose

sudo apt install docker-compose

To verify: docker-compose version

Then check permissions:

– docker container ls

If you are denied:

– sudo groupadd docker

– sudo gpasswd -a ${USER} docker

– su - $USER
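If you want to confirm all of the above in one go, a quick preflight script along these lines should do it. This is just a sketch; the helper names (have_cmd, check_tools, in_group) are my own and not part of Docker.

```shell
# Return success if the named command is on the PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# Report each listed tool as found or missing.
check_tools() {
  for tool in "$@"; do
    if have_cmd "$tool"; then
      echo "$tool: found"
    else
      echo "$tool: missing"
    fi
  done
}

# Return success if the current user belongs to the named group.
in_group() {
  id -nG | tr ' ' '\n' | grep -qx "$1"
}

check_tools docker docker-compose

if in_group docker; then
  echo "docker group: ok"
else
  echo "docker group: not a member (or you have not logged in again yet)"
fi
```

Group changes only apply to new login sessions, which is why the su - $USER step is there.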

NGINX Reverse Proxy

Make a directory (make sure you have permissions on it)

sudo mkdir nginx_proxy_manager

Because the directory was created with sudo it belongs to root, so I had to change permissions. Then create a file in the directory:

nano docker-compose.yaml

Copy the setup text from https://nginxproxymanager.com/guide/#quick-setup into the file.

- Be sure to change the passwords
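One way to do that without inventing passwords by hand is sketched below. It assumes you have put a placeholder (CHANGE_ME here) wherever the setup text has a password. The placeholder, the EXAMPLE_ key names and the helper names are all my own stand-ins, not anything from the NPM guide, and the demo runs against a temporary file rather than your real docker-compose.yaml.

```shell
# Generate a random 32-character hex string from the kernel's random source.
gen_password() {
  head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Replace every occurrence of a placeholder in a file with the given value.
# Writes to a temp file first, since in-place sed flags differ between systems.
# The value must not contain "/" (hex strings from gen_password never do).
set_password() {
  file=$1 placeholder=$2 value=$3
  sed "s/$placeholder/$value/g" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}

# Demo on a throwaway file with hypothetical key names.
demo=$(mktemp)
printf 'EXAMPLE_APP_DB_PASSWORD: "CHANGE_ME"\nEXAMPLE_DB_PASSWORD: "CHANGE_ME"\n' > "$demo"
set_password "$demo" CHANGE_ME "$(gen_password)"
cat "$demo"
rm -f "$demo"
```

Using one generated value for every placeholder keeps the app and the database in agreement, since they have to share the same database password.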

Then compose:

– docker-compose up -d

This pulls the specified Docker images, sets up the program and database, and starts the containers that run the NGINX reverse proxy server.

You should be able to access the GUI at http://127.0.0.1:81

Set up

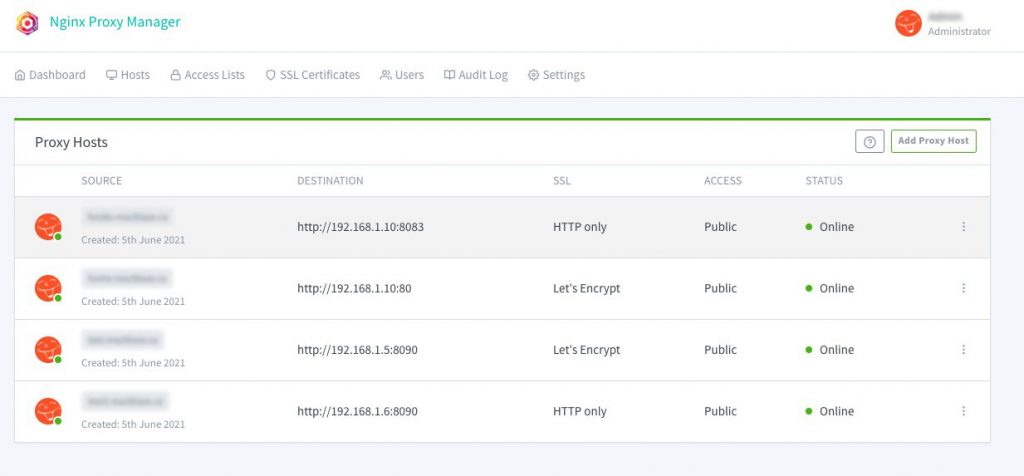

At this point it is a simple matter of adding a proxy host. Be sure to take advantage of the free SSL offered through Let’s Encrypt (a nonprofit Certificate Authority).

- Click Add Proxy Host

- Add domain name (the CNAME), IP to forward it to and the port

- Go to SSL tab

- Select “Request a New Certificate” from the dropdown

- Select Force SSL (this will automatically redirect all http requests to https), agree to the terms and add a contact email

You should be good to go. Go ahead and add as many proxies as you have CNAMEs and servers.
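To check that Force SSL is doing its job, you can look at the headers a plain http request gets back. The sketch below uses a hypothetical helper, redirect_info, that pulls out the status line and the redirect target. The sample response is made up so the block runs anywhere; it is not output captured from my server.

```shell
# Print the status line and the Location header from HTTP response headers on stdin.
redirect_info() {
  tr -d '\r' | awk 'NR == 1 { print } tolower($1) == "location:" { print $2 }'
}

# Against a live host (hypothetical name) you would run:
#   curl -sI http://testserver.myserver.com | redirect_info

# Made-up sample response, for illustration only:
printf 'HTTP/1.1 301 Moved Permanently\r\nLocation: https://testserver.myserver.com/\r\n\r\n' | redirect_info
```

If the second line printed starts with https://, the redirect is in place.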

Remember

And remember to close down all the ports on your router if, like me, you’d opened a bunch. Now you should only need 80 (http) and 443 (https).

Like I said, it’s been life changing for organizing my environment.